Don’t Believe Everything You See: A Discussion of Deepfake by Sarah Darer Littman with Lisa Krok

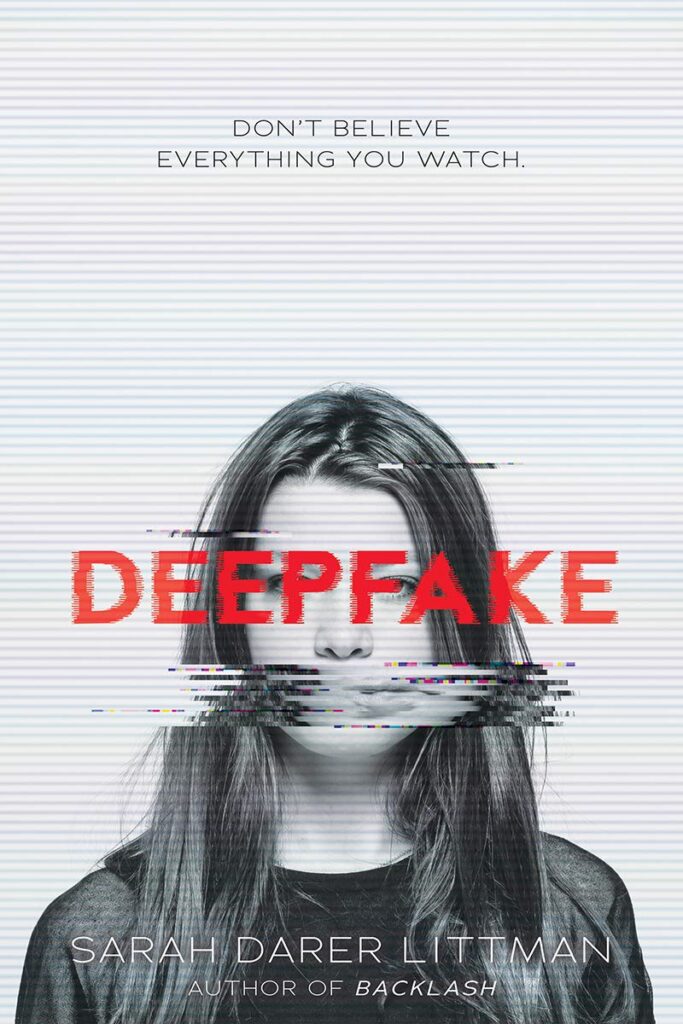

Having seen some deepfake videos, I was curious to read Deepfake by Sarah Darer Littman. This book is a fictionalized account of how this synthetic media can have drastic consequences.

First, what exactly is a deepfake? The term combines “deep learning” and “fake”. Deepfakes are AI (artificial intelligence) generated media in which one person’s likeness is swapped with another’s, or manipulated with the intent or likelihood of deceiving viewers about the recorded person’s words or actions. To make a realistic deepfake video, a creator first needs to train a neural network to learn what the person looks like in different lighting and from different angles, typically by feeding it many hours of real video footage. Generative adversarial networks, the technique behind many deepfakes, were invented in 2014 by Ian Goodfellow, then a Ph.D. student. Popular Mechanics reports that he now works at Apple.

In the novel, seniors Dara and Will are not only competing for valedictorian, but they have also been dating on the sly. When a video is posted to the school’s gossip site, Rumor Has It, Will is stunned to see Dara accusing him of paying someone to take the SAT for him. Feeling betrayed and falsely maligned, he breaks up with Dara and faces an investigation that could rescind his college acceptance. Here’s the catch: Dara knows she did not say those things or share that video. Leave it to this valedictorian candidate to scrutinize the video and surrounding evidence to discover what is really going on. This disturbing tale grips readers, who will be turning pages to find out how, why, and who is responsible.

According to a recent report from University College London, “Deepfakes are the most dangerous form of crime through artificial intelligence…This is because while deepfake detectors require training through hundreds of videos and must be victorious in every instance, malicious individuals only have to be successful once.” This raises the question of whether these videos are legal. Clearly, spreading misinformation via manipulated media is very concerning. Pornographic deepfakes can be subject to defamation or copyright suits, but deepfakes that show someone making deceitful or controversial statements they never said currently remain legal.

Tips to spot a deepfake from MIT’s Detect Fakes project:

(retrieved from https://www.media.mit.edu/projects/detect-fakes/overview/)

“The Detect Fakes experiment offers the opportunity to learn more about DeepFakes and see how well you can discern real from fake. When it comes to AI-manipulated media, there’s no single tell-tale sign of how to spot a fake. Nonetheless, there are several DeepFake artifacts that you can be on the look-out for.

- Pay attention to the face. High-end DeepFake manipulations are almost always facial transformations.

- Pay attention to the cheeks and forehead. Does the skin appear too smooth or too wrinkly? Is the agedness of the skin similar to the agedness of the hair and eyes? DeepFakes are often incongruent on some dimensions.

- Pay attention to the eyes and eyebrows. Do shadows appear in places that you would expect? DeepFakes often fail to fully represent the natural physics of a scene.

- Pay attention to the glasses. Is there any glare? Is there too much glare? Does the angle of the glare change when the person moves? Once again, DeepFakes often fail to fully represent the natural physics of lighting.

- Pay attention to the facial hair or lack thereof. Does this facial hair look real? DeepFakes might add or remove a mustache, sideburns, or beard. But, DeepFakes often fail to make facial hair transformations fully natural.

- Pay attention to facial moles. Does the mole look real?

- Pay attention to blinking. Does the person blink enough or too much?

- Pay attention to the size and color of the lips. Does the size and color match the rest of the person’s face?

These eight questions are intended to help guide people looking through DeepFakes. High-quality DeepFakes are not easy to discern, but with practice, people can build intuition for identifying what is fake and what is real. You can practice trying to detect DeepFakes at Detect Fakes.”

Deepfakes are surprisingly easy to create with the right app or software, and they can be made for fun or learning purposes rather than to deceive. Here are some examples:

Bill Hader Pacino Schwarzenegger Deepfake

Home Alone “Home Stallone” Deepfake

If you would like to try making a fun video of your own, check out these apps and websites:

Best Deepfake Apps and Websites

There is a teaching guide for this book available here: https://sarahdarerlittman.com/teacherreading_guides/deepfake_guide_-copy.pdf

Meet Librarian Lisa Krok

Lisa Krok, MLIS, MEd, is the adult and teen services manager at Morley Library and a former teacher in the Cleveland, Ohio area. She is the author of Novels in Verse for Teens: A Guidebook with Activities for Teachers and Librarians. Lisa’s passion is reaching marginalized teens and reluctant readers through young adult literature. She recently concluded a term on the Best Fiction for Young Adults committee (BFYA 2021), and also served two years on the Quick Picks for Reluctant Readers team. Lisa can be found being bookish and political on Twitter @readonthebeach.


About Karen Jensen, MLS

Karen Jensen has been a Teen Services Librarian for almost 30 years. She created TLT in 2011 and is the co-editor of The Whole Library Handbook: Teen Services with Heather Booth (ALA Editions, 2014).
